What Is an AI Agent? A Plain English Explanation

You've probably seen the word "agent" used a lot lately. AI companies use it constantly. Microsoft has agents. Anthropic has agents. Every product announcement seems to mention them.

But the explanations are often vague or buried in technical language. So let's fix that.

This post explains what AI agents actually are, how they differ from regular AI tools like ChatGPT, and why the distinction matters. In plain English, with real examples.

The simplest definition

A regular AI tool, like a chatbot, answers questions. You ask something, it responds. One question, one answer. Nothing else happens until you ask again.

An AI agent does something more: it takes a goal and works toward it across multiple steps, using tools, making decisions along the way.

That's the core difference. Chatbots respond. Agents act.

Here's a concrete example to make this stick.

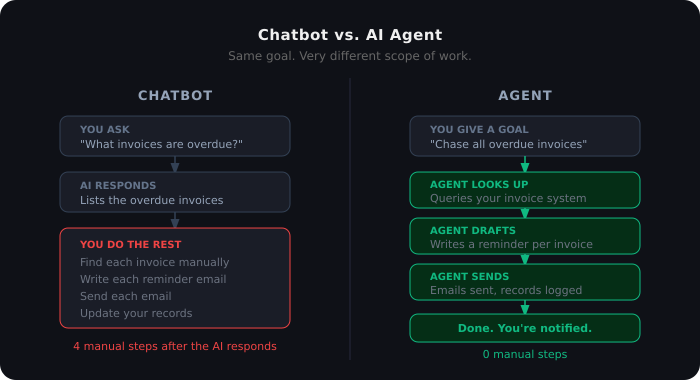

Chatbot version: You ask, "What invoices are overdue this month?" The AI tells you. You then go manually find those invoices, send reminder emails, and update your records.

Agent version: You say, "Chase up all overdue invoices." The agent looks up which invoices are overdue, drafts reminder emails for each one, sends them, and logs what it did, without you touching anything.

Same starting point. Very different scope of work.

What makes something an "agent" technically

When researchers and engineers use the term, an AI agent has three core properties:

1. It perceives its environment. The agent can read information from files, databases, emails, websites, or APIs. It's not just working from what you typed in the chat. It actively goes and gets context.

2. It uses tools. This is what separates agents from chat. An agent can call external tools: search the web, read a document, run a script, update a spreadsheet, send a message. A chatbot can only output text.

3. It acts over multiple steps. An agent doesn't just produce one response and stop. It works through a sequence of steps, checking its progress and adjusting as it goes. Step 1 might inform what happens in Step 3.

Anthropic's definition is straightforward: an agent is an LLM (a large language model) that uses tools in a loop. It runs, uses a tool, gets a result, runs again, uses another tool, and repeats until it's done.

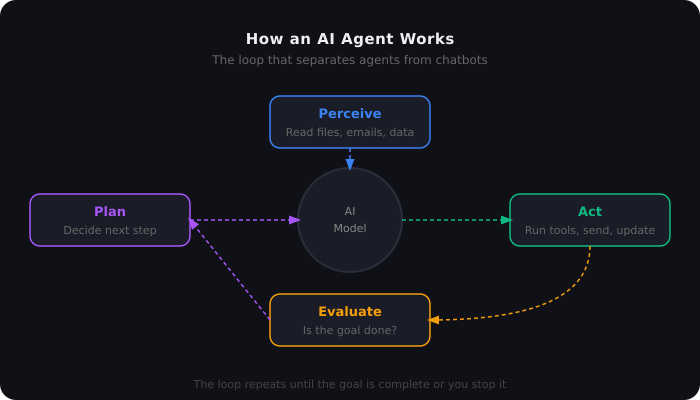

Agent Loop

A circular flow diagram illustrating how an AI agent works. Four nodes — Perceive (reads files, emails, data), Plan (decides next step), Act (runs tools, sends, updates), and Evaluate (checks if the goal is done) — connect through a central AI Model hub. Dashed arrows show the loop repeating until the task is complete.
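To make the loop concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: `fake_model` is a hard-coded stand-in for a real LLM call, and the two tools are hypothetical functions that return canned data. It is not how any particular platform implements its loop, only the general shape of "run, use a tool, get a result, run again".

```python
from typing import Callable

# Hypothetical tools: plain functions the "model" is allowed to call.
def lookup_overdue_invoices() -> list[str]:
    return ["INV-001", "INV-007"]

def send_reminder(invoice_id: str) -> str:
    return f"reminder sent for {invoice_id}"

TOOLS: dict[str, Callable[..., object]] = {
    "lookup_overdue_invoices": lookup_overdue_invoices,
    "send_reminder": send_reminder,
}

def fake_model(history: list[dict]) -> dict:
    """Stand-in for an LLM call: chooses the next action from the history so far."""
    results = [h for h in history if h["role"] == "tool"]
    if not results:
        return {"tool": "lookup_overdue_invoices", "args": {}}
    invoices = results[0]["result"]
    reminded = len(results) - 1  # every tool result after the lookup is a reminder
    if reminded < len(invoices):
        return {"tool": "send_reminder", "args": {"invoice_id": invoices[reminded]}}
    return {"done": True, "summary": f"Reminded {reminded} customers."}

def run_agent(goal: str, max_steps: int = 10) -> tuple[str, list[dict]]:
    history: list[dict] = [{"role": "user", "content": goal}]
    for _ in range(max_steps):  # a step limit keeps the loop from running forever
        decision = fake_model(history)                        # plan
        if decision.get("done"):                              # evaluate
            return decision["summary"], history
        result = TOOLS[decision["tool"]](**decision["args"])  # act
        history.append(                                       # perceive the result
            {"role": "tool", "name": decision["tool"], "result": result}
        )
    return "Stopped: step limit reached.", history

summary, history = run_agent("Chase up all overdue invoices.")
print(summary)  # Reminded 2 customers.
```

The step limit is the one design choice worth noting: because the model decides when it is finished, a loop like this usually needs some outer bound so a confused run stops instead of spinning.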

A real example: how an agent processes a support ticket

Here's what the loop looks like in practice.

Say you've built a support ticket agent. A customer emails in saying their order hasn't arrived.

1. The agent reads the email (perceives)

2. It searches your order management system for that customer's order (tool: database lookup)

3. It finds the order, marked as shipped, but tracking shows delayed

4. It checks your policy document for how to handle shipping delays (tool: document search)

5. It drafts a reply explaining the delay and offering a discount code (tool: email draft)

6. Depending on how you've configured it, it either sends the email or queues it for your review

That whole sequence happened without you doing anything after the email arrived. That's an agent.
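The steps above can be sketched in Python. This is a simplified illustration, not a real integration: the order table, the policy text, and every function name are hypothetical. The order of steps is also hard-coded here so the tools and the review gate are easy to see, whereas in a real agent the model would choose which tool to call next.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Order:
    order_id: str
    status: str
    tracking: str

# Hypothetical stand-ins for an order system and a policy document.
ORDERS = {"ana@example.com": Order("A-1001", "shipped", "delayed")}
POLICY = {"delayed": "Apologise, explain the delay, and offer a discount code."}

def find_order(customer_email: str) -> Optional[Order]:
    """Tool: database lookup."""
    return ORDERS.get(customer_email)

def search_policy(topic: str) -> Optional[str]:
    """Tool: document search."""
    return POLICY.get(topic)

def draft_reply(order: Order, guidance: str) -> str:
    """Tool: email draft. A real agent would have the model write this text."""
    return f"Re: order {order.order_id}. Your parcel is {order.tracking}. [{guidance}]"

def handle_ticket(customer_email: str, auto_send: bool = False) -> dict:
    order = find_order(customer_email)
    if order is None:
        return {"action": "escalate", "reason": "no matching order"}
    guidance = search_policy(order.tracking)
    if guidance is None:
        return {"action": "escalate", "reason": f"no policy for '{order.tracking}'"}
    reply = draft_reply(order, guidance)
    # Configuration decides whether the reply goes out or waits for a human.
    return {"action": "send" if auto_send else "queue_for_review", "reply": reply}

print(handle_ticket("ana@example.com")["action"])  # queue_for_review
```

Defaulting `auto_send` to `False` reflects the cautious option described in the steps: the draft is prepared, but a person approves it before anything leaves the building.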

How this is different from regular automation

You might be thinking: "That sounds like a workflow. I can build that in Power Automate or Zapier."

You can, and there's overlap. But there's an important difference.

Traditional automation is deterministic. Every step is pre-defined. If the email contains X, do Y. If the invoice amount is above Z, notify this person. The rules are fixed. The path is fixed. When something falls outside the expected parameters, the automation fails or gets stuck.

An agent handles variation. If the customer's email is angry, the agent can adjust its tone. If the order system returns a status code it hasn't seen before, it can reason about what to do. It doesn't just match fixed patterns. It works from context.

This is why Microsoft's own documentation uses an invoice example to explain the gap: a Power Automate flow fails when an invoice has an unexpected vendor address. A Copilot Studio agent reads the invoice, notices the address change, reasons that it's likely a legitimate vendor update, and asks a human to confirm before proceeding. Same trigger, very different handling.

Neither approach is always better. Deterministic automation is faster, more reliable, and cheaper for tasks that never vary. Agents are better for tasks that require judgment.
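A small Python sketch can show where the two approaches diverge. The rule table, the status names, and `stub_decide` are all invented for this example. `stub_decide` is only a placeholder for the judgment step that a model would perform in a real agent, so treat this as an outline of the control flow, not a working agent.

```python
from typing import Callable

# Fixed rules: each known status maps to exactly one action.
RULES = {
    "shipped": "send_tracking_link",
    "delivered": "ask_for_feedback",
}

def deterministic_flow(status: str) -> str:
    # Fixed path: any status outside the table stops the flow.
    if status not in RULES:
        raise ValueError(f"unhandled status: {status}")
    return RULES[status]

def agent_style_flow(status: str, decide: Callable[[str], str]) -> str:
    # Known cases still take the cheap, predictable path.
    if status in RULES:
        return RULES[status]
    # Unknown cases go to a judgment step (an LLM call in a real agent),
    # and the proposal is held until a human confirms it.
    proposal = decide(status)
    return f"needs_human_confirmation:{proposal}"

def stub_decide(status: str) -> str:
    # Placeholder for a model call; it always proposes the same action.
    return "hold_and_notify_customer"

try:
    deterministic_flow("customs_hold")
except ValueError as err:
    print(err)  # unhandled status: customs_hold

print(agent_style_flow("customs_hold", stub_decide))
# needs_human_confirmation:hold_and_notify_customer
```

Note that the agent-style version still uses the rule table for the cases it covers. That mirrors the point above: deterministic handling stays the better choice wherever the task never varies.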

What agents can and can't do today

It's worth being honest about where things actually stand.

What agents are good at right now:

Tasks that involve gathering information from multiple sources

Tasks that are hard to pre-define because inputs vary

Research tasks: finding, summarising, and synthesising information

Drafting content based on context they've gathered

Routing and triage decisions (e.g., which support tier should handle this?)

Where agents still struggle:

Tasks that require genuine real-time judgment in high-stakes environments (medical decisions, legal advice, financial trades)

Long-running tasks over many hours without any human checkpoints

Tasks where the cost of errors is high. Agents make mistakes, and those mistakes can cascade.

Anything requiring deep proprietary knowledge they haven't been given access to

Anthropic's own engineering team notes that agents use roughly four times more tokens than regular chat interactions, and multi-agent systems use about fifteen times more than chat. More capability comes with more cost. Reach for agents when the task is complex enough to justify it.
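To see what those multipliers mean in practice, here is a rough back-of-the-envelope calculation in Python. The baseline of 2,000 tokens per chat interaction and the price of $5 per million tokens are assumptions picked to keep the arithmetic simple. They are not measured figures or real pricing, so substitute your own numbers.

```python
CHAT_TOKENS = 2_000        # assumed baseline for one chat interaction
PRICE_PER_MILLION = 5.00   # assumed price in dollars per million tokens

# Multipliers relative to chat, taken from the figures quoted above.
MULTIPLIERS = {"chat": 1, "single agent": 4, "multi-agent": 15}

def estimate(multiplier: int) -> tuple[int, float]:
    """Return (tokens, cost in dollars) for one task at the given multiplier."""
    tokens = CHAT_TOKENS * multiplier
    cost = tokens / 1_000_000 * PRICE_PER_MILLION
    return tokens, cost

for name, multiplier in MULTIPLIERS.items():
    tokens, cost = estimate(multiplier)
    print(f"{name}: {tokens:,} tokens, ${cost:.3f}")
# chat: 2,000 tokens, $0.010
# single agent: 8,000 tokens, $0.040
# multi-agent: 30,000 tokens, $0.150
```

Per task the amounts look small, but the ratio is what matters: at a few thousand tasks a day, the gap between 1x and 15x becomes a real budget line.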

The difference between an AI agent and an AI assistant

People sometimes use these interchangeably. They're different.

An AI assistant responds to you. You're in the loop for every step. It helps you think, draft, and decide, but you're the one taking action.

An AI agent acts on your behalf. You give it a goal and it works toward it. You're not necessarily in the loop for each step.

Claude in a chat window is an assistant. Claude Code, given a task to refactor a codebase, running scripts and editing files over the course of an hour, is behaving as an agent.

The same model can be used both ways. What makes something an agent is the setup: the tools available to it, the level of autonomy you've given it, and whether it's completing tasks or just helping you complete them.

Chatbot vs. Agent

A split diagram comparing how a chatbot and an AI agent handle the same goal: chasing overdue invoices. On the left, the chatbot answers the question and leaves four manual steps to the user. On the right, the agent looks up invoices, drafts reminders, sends emails, and logs the outcome automatically, with zero manual steps after the initial instruction.

Why this matters right now

The reason "agents" is the word of 2025 is that the tooling to build them has become genuinely usable.

Eighteen months ago, building a reliable agent required significant engineering. You needed to handle tool calling, error recovery, state management, and output parsing yourself. Most agents failed or hallucinated their way into errors within a few steps.

The infrastructure has improved significantly. Claude Code, Copilot Studio, and similar platforms now handle the plumbing. You define the tools and the goal. The platform handles the loop.

This means agents are moving from research demos into real business use. Companies are using them for customer support triage, document processing, code review, research synthesis, and internal operations.

It also means it's a good time to understand what they are before everyone around you claims to be building them without knowing what they're building.

A quick glossary of terms you'll see

As you go deeper into this topic, you'll run into related terms. Here's what they actually mean:

LLM (Large Language Model): The AI model itself. Claude, GPT-4, Gemini. The "brain."

Tool / Function calling: A mechanism that lets an LLM trigger actions: run a search, read a file, call an API. This is what turns a chatbot into an agent.

Orchestrator: In a multi-agent system, the orchestrator is the lead agent that plans and delegates tasks to other agents.

Subagent: An agent that handles a specific part of a larger task, reporting back to the orchestrator.

MCP (Model Context Protocol): An open standard for connecting AI agents to external tools and data sources. We cover this in the next post in this series.

Agent Skills: A newer concept. These are reusable instruction sets that teach an agent how to handle specific tasks. More on this in Part 3.
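Of the terms above, tool calling is the easiest to show in code. The Python sketch below is a generic illustration under stated assumptions: the field names in the tool description, the `get_order_status` function, and the shape of the model's output are all hypothetical, and real platforms each define their own exact format. The idea is the same everywhere: the model is given a description of a tool, it replies with a structured request to call it, and your code runs the matching function.

```python
import json

# A description of the tool that the model would be shown.
# The field names here are illustrative, not any vendor's exact schema.
GET_ORDER_TOOL = {
    "name": "get_order_status",
    "description": "Look up the shipping status of an order by its ID.",
    "parameters": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}

def get_order_status(order_id: str) -> dict:
    # Hypothetical implementation backed by an in-memory table.
    table = {"A-1001": "delayed"}
    return {"order_id": order_id, "status": table.get(order_id, "unknown")}

# Maps tool names to the Python functions that implement them.
REGISTRY = {"get_order_status": get_order_status}

def dispatch(tool_call_json: str) -> str:
    """Run the function a model asked for and return the result as JSON text."""
    call = json.loads(tool_call_json)
    func = REGISTRY.get(call["name"])
    if func is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    return json.dumps(func(**call["arguments"]))

# What a model's tool request might look like (assumed shape).
model_output = '{"name": "get_order_status", "arguments": {"order_id": "A-1001"}}'
print(dispatch(model_output))  # {"order_id": "A-1001", "status": "delayed"}
```

The model never runs anything itself. It only produces the request, and the surrounding code decides whether and how to execute it, which is also the natural place to add permission checks.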

What this series covers

This post is the foundation. From here, the series builds:

Part 2: What is MCP, and why is every AI platform adopting it?

Part 3: What are Agent Skills, and how do they give Claude persistent knowledge?

Part 4: Power Automate as an agent platform: what's already possible

Part 5: Agent Skills vs. MCP: when to use which

Part 6: Claude vs. Copilot Studio: an honest comparison from someone who uses both

If you work in automation, software development, or enterprise operations, agents are going to affect how your work gets done. These posts are designed to give you a clear, practical foundation, without the hype.

Frequently Asked Questions

What is the simplest definition of an AI agent? An AI agent is a system that takes a goal and works toward it across multiple steps, using tools to take actions, rather than just answering questions in a chat.

What's the difference between a chatbot and an AI agent? A chatbot responds to what you type. An AI agent can use external tools, run sequences of steps, and complete tasks on your behalf without you doing each step manually.

Are AI agents reliable? They're improving rapidly, but they make mistakes. The best practice is to keep humans in the loop for high-stakes decisions, and to review agent outputs before they trigger irreversible actions.

Do I need to code to use AI agents? It depends on the platform. Copilot Studio allows non-developers to build agents through a low-code interface. Claude Code is designed for developers. Most enterprise platforms offer both options.

What does "agentic AI" mean? Agentic AI refers to AI systems that operate with some degree of autonomy. They perceive their environment, use tools, and act across multiple steps toward a goal. It's the same concept as AI agents, just used as an adjective.